History:Change Default Data Quality Proposal

| Status: This page describes an active proposal and is not official. |


Raw numbers: Change Default Data Quality Proposal/Raw Numbers

Please note that the data quality setting implies nothing about the musical quality of the artist or the release, nor does this proposal imply any denigration of the editing abilities of the editor who creates or edits a release.

Proposer: BrianFreud

The Problem

Problem 1: Too many open edits.

Problem 2: Too few voters voting on those edits.

Problem 3: Too many inexperienced editors editing without knowledge of the relevant style guidelines.

Result 1: Too many "add release" edits slipping into the database without having been voted in or out.

Result 2: Too many other edits to releases slipping in without proper vetting, allowing for releases to slowly "degrade" in overall quality as time passes.

Currently, all releases enter the database at normal quality.

- All added releases enter the database, if not voted down.

- All edits to releases are applied, if not voted down.

- All ARs attached to edits are applied, if not voted down.

This proposal attempts to address some of these problems by making two changes in how quality levels for releases work.

Data Quality

From DataQuality:

The data quality idea has the following goals:

- Establish a method to determine the quality of an artist and the releases that belong to that artist. This provides consumers of MusicBrainz a clue about the relative quality rating of the data in the database.

- Provide fine grained control over what efforts are required to edit the database and to vote on those edits.

- Provide editors with a means to allow easier editing of data that is deemed to be of poor quality.

- Provide editors with a means to make it harder to edit data that is considered to be of good quality.

- Reduce the overall number of edits in the system by making the requirements to pass an edit suited for each edit type.

The Numbers

Currently, as mentioned, a release enters at normal quality, whether it was voted in or not. It then can be manually edited to low quality, but this is not frequently done, and adds additional open edits.

First, why do we even care about the DataQuality setting for a release?

As of June 17, 2007, we have 490,844 releases in the database. This number has been increasing recently at between 70 and 300 releases each day.

As of June 17, 2007, we have 2,508 open "add release" edits.

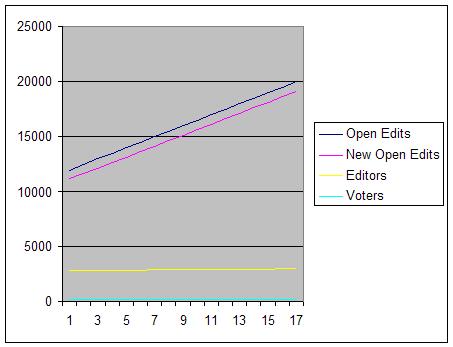

As of June 17, 2007, we have 12,086 open edits, with the average number of open edits at any one time tending to be closer to 14,000 to 15,000. This number also has been increasing rather steadily, and when the various stages of NextGenerationSchema begin to occur, just as when AdvancedRelationships were introduced, we can expect the number of open edits to again increase significantly.

Finally, again as of June 17, 2007, while 2,620 editors were actively editing in the past two weeks, fewer than 10% (210) voted on any edits.
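As a quick check of the voter share quoted above, the figures can be plugged into a short calculation. This is only an illustrative sketch; the variable names are not taken from any MusicBrainz code.

```python
# Figures quoted above for the two weeks ending June 17, 2007.
active_editors = 2620
active_voters = 210

# Share of active editors who also voted on at least one edit.
voter_share = active_voters / active_editors
print(f"{voter_share:.1%}")  # 8.0%
```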

All of this means that the database is growing at an ever-increasing rate, and the number of open edits continues to grow. However, the number of voters voting on these edits is not growing. During any recent two-week period, the number of voters has remained rather steady, at somewhere between 200 and 225 active voters.

The simple answer would seem to be to get more editors voting as well as editing. Though this would be the best answer, we must be realistic: it's not going to happen in the kinds of numbers we need. As the database has grown and developed, the number of voters has grown slowly, but the ratio of voters to active editors has remained steadily below 1:10. This also assumes that every active voter is qualified to vote on every edit. For more specialized releases (classical, Japanese anime, Hebrew script, etc.), the number of voters who are qualified to fix style issues or catch errors is much more limited. As MusicBrainz grows in size and prominence, this will become ever more of a problem, as releases are added in languages or scripts that no current active voter is qualified to address, let alone three such voters.

The Proposal

This proposal comes in four parts.

Terminology clarification: The term "Voted" here also includes "auto-applied" edits by auto-editors.

1. To address various concerns that came up when this initially was discussed on the users mailing list, the three DataQuality levels are renamed.

- "Low Quality" is renamed to "Unverified"

- "Normal Quality" is renamed to "Verified"

- "High Quality" remains unchanged.

2. A release can enter the database two ways:

- a. It is voted in.

- b. It is not voted in.

In case a, the release would enter the database, as it does currently, with the quality level set to "Verified".

In case b, the release would enter the database with the quality level set to "Unverified".

This would solve several problems.

Releases, especially case b releases, typically are in the worst shape when they first enter the database. Over half the time, no verification URL is provided, so we don't even know for certain that these releases exist. Frequently they are duplicates. Classical releases often don't follow the ClassicalStyleGuidelines. Various Artists releases have artist names in the tracks. Releases or tracks are attributed to the wrong artist. The proper capitalization standards often haven't been followed. Official releases are set to Pseudo-Release. Typos are frequent. Script and language haven't been set for the release. Et cetera.

- By setting these to Unverified status, we indicate all these issues. Also, for the editor who chooses to try to clean one of these up, there is a secondary benefit. Edits for releases at the lowest quality setting take only 4 days to expire, rather than the 14 days it takes for edits to releases at the middle quality setting. The cleanup edits required for these, even if unvoted, will take effect much faster, improving the quality of the release and decreasing the number of open corrective edits. Once the release is cleaned up, a single edit can be placed to raise the quality level to "Verified". Though this does add one edit per release to the number of open edits, the number of open corrective edits passing much more quickly out of the queue ought to significantly outweigh the single additional quality edit.

In this way, rather than unverified releases entering the database as normal quality, only for us to work backwards, placing edits to lower the quality level on unverified releases, we instead can edit in a progressive manner, moving from the lowest quality level to a more verified position with regard to the veracity of the listing.

3. The More Complex Problem

There is a secondary problem related to part 2. Assuming we have a perfectly good, verified release, we still have the other 85% of edits which are not being fully voted or vetted. These are all the non-add release edits. The basic "shell" of the release (the artist, tracklist, and title) may be correct at first, but the overall quality may degrade as edits occur and ARs are added. ARs may link to the wrong artists, composers, etc. At this point, though the shell is correct, the rest is unverified. A small handful of incorrect ARs or edits may not make a release wrong, but at some point, I think we can all agree, the total information collected for that release has returned to an unverified state.

This part of the proposal suggests that a counter be used to count the number of edits applied to a release without being voted on. "High quality" releases are protected from this problem, as the default for unvoted edits at "High quality" is that those edits fail. For edits at "Verified" (currently "Normal quality"), however, the default is for those edits to pass. This part of the proposal suggests that, when this counter of unvoted edits reaches a certain number, the quality level on the release automatically drop back to "Unverified". This does add one edit to the number of open edits, to return the release to "Verified" status, but it would seem to be a vote that ought to occur anyhow on these releases. The release may have 100 ARs attached, all applied without a vote, but at least they will be verified as a group by the edit to return the release to a "Verified" state.

The question that remains unaddressed here is at what number the counter ought to be set such that releases do have this action occur. I suggested 5 in the preliminary RFC on the users list, and that number wasn't challenged.

4. When a vote successfully raises a release to "Verified", the counter for #3 above is reset to zero.
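Parts 2 through 4 can be read as a small state machine on each release. The following is a minimal sketch of that reading, not MusicBrainz server code: the names `Quality`, `Release`, `initial_quality`, `expire_unvoted_edit`, `raise_quality` and `UNVOTED_EDIT_LIMIT` are all hypothetical, the threshold of 5 is only the number floated in the preliminary RFC, and resetting the counter on any successful raise (including to "High Quality") is an assumption.

```python
from dataclasses import dataclass
from enum import Enum


class Quality(Enum):
    UNVERIFIED = "Unverified"  # currently "Low Quality"
    VERIFIED = "Verified"      # currently "Normal Quality"
    HIGH = "High Quality"


# Hypothetical threshold: 5 was suggested in the preliminary RFC, not decided.
UNVOTED_EDIT_LIMIT = 5


def initial_quality(voted_in: bool) -> Quality:
    """Part 2: voted-in releases start Verified, all others Unverified.

    "Voted in" includes edits auto-applied by auto-editors.
    """
    return Quality.VERIFIED if voted_in else Quality.UNVERIFIED


@dataclass
class Release:
    quality: Quality
    unvoted_edits: int = 0

    def expire_unvoted_edit(self) -> bool:
        """Handle an edit that expired without votes; return True if applied."""
        if self.quality is Quality.HIGH:
            return False  # unvoted edits fail by default at High Quality
        self.unvoted_edits += 1
        if (self.quality is Quality.VERIFIED
                and self.unvoted_edits >= UNVOTED_EDIT_LIMIT):
            self.quality = Quality.UNVERIFIED  # part 3: automatic drop
        return True

    def raise_quality(self, new_quality: Quality) -> None:
        """Part 4: a successful raise-quality vote resets the counter."""
        self.quality = new_quality
        self.unvoted_edits = 0


# Usage: a voted-in release collects unvoted edits until it is demoted.
release = Release(initial_quality(voted_in=True))
for _ in range(UNVOTED_EDIT_LIMIT):
    release.expire_unvoted_edit()
print(release.quality.value)  # Unverified

release.raise_quality(Quality.VERIFIED)
print(release.quality.value, release.unvoted_edits)  # Verified 0
```

Keeping the counter on the release itself, rather than deriving it from edit history, keeps the demotion check to a single comparison each time an unvoted edit is applied. Whether unvoted edits should also be counted while a release is already "Unverified" is left open by the proposal; the sketch counts them, which is harmless because the counter is reset when the release is raised.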

Notes from the preliminary RFC on the users mailing list

1. Some took offense that their add release edits would seem "low quality". Again, this proposal only states the verified or unverified status of a release, and that status may be corrected by a single raise quality edit. It is hoped that the terminology change proposed in #1 also better clarifies that this is really a question of verification, not quality.

2. Some were concerned that this would mean an ever increasing amount of the database is in an "unverified" state. I would suggest, first, that this already is the case - we just don't accurately reflect the fact. Second, with the corollary (and, theoretically, significant) decrease in open corrective edits waiting in the system, even if we continue to have only a small number of voters, the ratio of voters to open edits ought to rise enough that at least somewhat fewer releases, if not massively fewer, would enter in the unverified state.

Some quick numbers to see why this problem, left unaddressed, will become progressively worse

If we assume 2000 of the "edit x" edits currently open are "corrective" edits for releases left unfixed since they first were entered, changing the time for these to pass from 14 to 4 days would mean that the 12,000 edits currently open would decrease by about 7.1%. Assumptions: for every 1000 edits added to the average open edits, we get 10 new editors and 1 new voter. (Actually, this is just about the ratio we have been averaging, according to the server statistics.)